Remember with vSphere 5.5 you MUST use the web client to fully configure the VM properties, including hardware version 10. So while I show the legacy C# client for 5.1, please go to your 5.5 web client to do all of the configuration. The VI client is officially a very lame duck in 5.5 and will be a dodo bird next year.

Blog Series

SQL 2012 Failover Cluster Pt. 1: Introduction

SQL 2012 Failover Cluster Pt. 2: VM Deployment

SQL 2012 Failover Cluster Pt. 3: iSCSI Configuration

SQL 2012 Failover Cluster Pt. 4: Cluster Creation

SQL 2012 Failover Cluster Pt. 5: Service Accounts

SQL 2012 Failover Cluster Pt. 6: Node A SQL Install

SQL 2012 Failover Cluster Pt. 7: Node B SQL Install

SQL 2012 Failover Cluster Pt. 8: Windows Firewall

SQL 2012 Failover Cluster Pt. 9: TempDB

SQL 2012 Failover Cluster Pt. 10: Email & RAM

SQL 2012 Failover Cluster Pt. 11: Jobs N More

SQL 2012 Failover Cluster Pt. 12: Kerberos n SSL

Virtual Hardware

Selecting the right virtual hardware is important for the best performance. Here the major food groups are memory, compute, networking, and storage.

Memory

Memory configuration is very dependent on the SQL workload and your applications. For SQL Server to use large pages (which can help performance), the VM needs at least 8GB of RAM. In general I don’t like memory reservations, but for SQL servers I DO reserve 100% of the RAM, so we have guaranteed memory performance. In my small lab I configured 5GB of RAM, but for production I’d do 8GB or more.

vCPUs

The vCPU count is also very dependent on the workload, and remember not to over-allocate vCPUs, as that can negatively impact performance. For this lab I did one vCPU, but in production I’d do two minimum, then monitor. I do like configuring hot add of RAM and CPU, so that I can dynamically add more resources with zero downtime. So let’s turn that on now, to head off a future outage just to enable the feature.

Networking

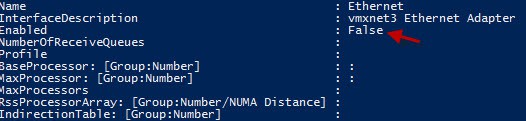

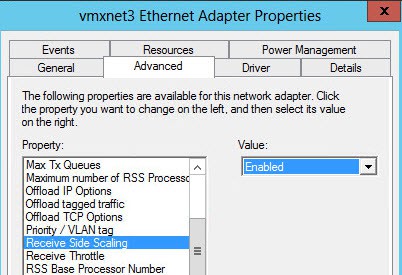

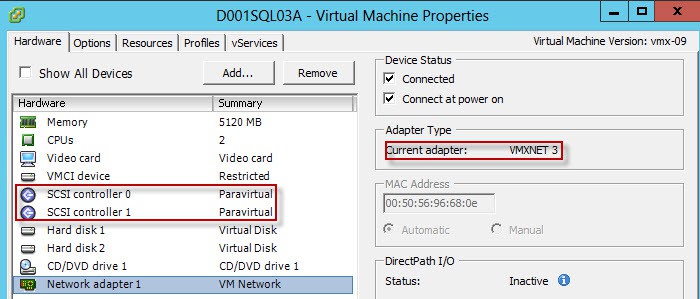

Networking is pretty straightforward, and I always, always use the VMXNET3 adapter for Windows VMs. ‘Nuff said. Just do it. But what is NOT enabled by default on the VMXNET3 driver is RSS, or Receive Side Scaling, which can help distribute the network I/O load across multiple vCPUs. You enable this inside the guest VM (see below).

Storage

I’m also a pvscsi fan, and only for VDI do I use the SAS controller. Since SQL servers can have heavy IO, I always use the pvscsi controller. I have a blog article on how to inject the VMware drivers into your Windows install ISO, here. Or you can use MDT and inject them that way, which is my new preferred method.

The boot drive size may be a slight point of contention. My philosophy is that the C drive is for the OS, patches, and generally the swap file. It should stay lean, not grow very much, and usually be in the 40-50GB range, at most. SQL binaries should all be installed on a separate drive, so about the only things using up C space are patches, OS logs, and OS temp files. Those do not grow exponentially, so it’s silly to overprovision the C drive. To fully support clustering you should use EZT (eager zeroed thick) disks, rather than thin or lazy zeroed disks.

Another important factor to keep in mind is spreading the virtual disks across multiple pvscsi controllers. If you try and shove all your I/Os through a single virtual pvscsi card you will likely be disappointed. You can have up to four pvscsi cards per VM. In an AlwaysOn cluster with no shared LUNs, I distribute the multiple disks (database, log, tempDB, etc.) across all four controllers. With our MSCS config here, the heavy I/O is going to the in-guest iSCSI targets, so it’s less of a concern. But I would still put the D drive on a second pvscsi card. Let’s go for a 10GB D drive to house the SQL binaries.
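
To make the "spread the disks across controllers" idea concrete, here is a minimal Python sketch of a round-robin layout. It only models the placement on paper and does not talk to vCenter. The function name `spread_disks` and the disk labels are made up for illustration, and it assumes the usual virtual SCSI numbering where unit 7 on each bus is taken by the controller itself.

```python
from itertools import cycle

MAX_PVSCSI_CONTROLLERS = 4  # per-VM limit mentioned in the text
RESERVED_UNIT = 7           # assumption: unit 7 on each bus belongs to the controller
MAX_UNIT = 15               # highest unit number on a virtual SCSI bus


def spread_disks(disks, controllers=MAX_PVSCSI_CONTROLLERS):
    """Round-robin disk labels across controllers.

    Returns a dict of {disk_label: (bus, unit)}. Hypothetical helper,
    meant for planning a layout, not for configuring a real VM.
    """
    if not 1 <= controllers <= MAX_PVSCSI_CONTROLLERS:
        raise ValueError("a VM can have between 1 and 4 pvscsi controllers")
    next_unit = [0] * controllers  # next free unit number on each bus
    layout = {}
    for disk, bus in zip(disks, cycle(range(controllers))):
        unit = next_unit[bus]
        if unit == RESERVED_UNIT:  # skip the slot used by the controller
            unit += 1
        if unit > MAX_UNIT:
            raise ValueError(f"controller {bus} is full")
        layout[disk] = (bus, unit)
        next_unit[bus] = unit + 1
    return layout


if __name__ == "__main__":
    # Illustrative labels only; use whatever disks your design calls for.
    example = ["C (OS)", "D (SQL binaries)", "Database", "Logs", "TempDB"]
    for disk, (bus, unit) in spread_disks(example).items():
        print(f"{disk:18} SCSI({bus}:{unit})")
```

For the two-disk MSCS layout in this post you would call `spread_disks(["C", "D"], controllers=2)`, which puts C on the first controller and D on the second.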

Hardware Summary

If you got tired of reading through my design choice reasoning, here’s a quick recap of the decision highlights:

- Use hardware version 10, when deploying on vSphere 5.5

- Allocate 8GB or more to the VM so you can use large pages

- Reserve all VM memory to prevent unexpected swapping

- Don’t over-allocate vCPUs

- Configure hot add/plug of vCPUs and memory for future non-disruptive expansion

- Use VMXNET3 NIC

- Use two pvscsi controllers, one for C and one for D

- Use eager zero thick disks

- Remove unnecessary hardware like floppy drives
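
If you build a lot of these VMs, the checklist above can also be turned into a quick sanity check. The following is a hedged Python sketch: the `VMSpec` class, its field names, and the string values like `"pvscsi"` and `"eagerzeroedthick"` are all hypothetical and do not come from any VMware API. The "don't over-allocate vCPUs" item is left out on purpose, since that one needs monitoring rather than a fixed rule.

```python
from dataclasses import dataclass, field


@dataclass
class VMSpec:
    """Hypothetical description of a VM; field names are illustrative only."""
    hardware_version: int
    memory_gb: int
    memory_reservation_gb: int
    vcpus: int
    cpu_hot_add: bool
    memory_hot_add: bool
    nic_type: str
    scsi_controllers: list = field(default_factory=list)
    disk_formats: list = field(default_factory=list)
    has_floppy: bool = False


def check_spec(vm):
    """Return the checklist items that the given spec does not meet."""
    findings = []
    if vm.hardware_version < 10:
        findings.append("use hardware version 10 on vSphere 5.5")
    if vm.memory_gb < 8:
        findings.append("allocate 8GB or more of RAM")
    if vm.memory_reservation_gb < vm.memory_gb:
        findings.append("reserve all VM memory")
    if not (vm.cpu_hot_add and vm.memory_hot_add):
        findings.append("enable hot add for vCPUs and memory")
    if vm.nic_type.lower() != "vmxnet3":
        findings.append("use the VMXNET3 NIC")
    if sum(1 for c in vm.scsi_controllers if c.lower() == "pvscsi") < 2:
        findings.append("use two pvscsi controllers")
    if any(f.lower() != "eagerzeroedthick" for f in vm.disk_formats):
        findings.append("use eager zeroed thick disks")
    if vm.has_floppy:
        findings.append("remove the floppy drive")
    return findings


if __name__ == "__main__":
    # The lab VM from this post: fine except for the 5GB of RAM.
    lab = VMSpec(10, 5, 5, 1, True, True, "VMXNET3",
                 ["pvscsi", "pvscsi"],
                 ["eagerzeroedthick", "eagerzeroedthick"])
    for item in check_spec(lab):
        print("TODO:", item)
```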

Deployment

Be sure to deploy your VMs using customization specifications, so that sysprep can run. Naming convention is of course up to you, but I like something along the lines of SQL01A and SQL01B, so you can quickly identify they are a pair. I prefix that with network/location name as well. For my lab we will use D001SQL03A and D001SQL03B. At this point you should now have two VMs, configured the same, and following the general best practices outlined above. A few guest OS tweaks are now in order.
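
If you script your deployments, the naming convention above is easy to generate. This is a small Python sketch under my own assumptions: `node_names` is a hypothetical helper, and the 15-character check reflects the NetBIOS computer name limit that Windows machine names still have to respect.

```python
from string import ascii_uppercase

NETBIOS_MAX_LEN = 15  # Windows computer names must fit the NetBIOS limit


def node_names(site, role, pair_number, nodes=2):
    """Build cluster node names like D001SQL03A and D001SQL03B.

    site        -- network/location prefix, e.g. "D001"
    role        -- server role, e.g. "SQL"
    pair_number -- cluster number, zero-padded to two digits
    nodes       -- how many nodes to name (A, B, C, ...)
    """
    if not 1 <= nodes <= len(ascii_uppercase):
        raise ValueError("nodes must be between 1 and 26")
    names = [f"{site}{role}{pair_number:02d}{ascii_uppercase[i]}"
             for i in range(nodes)]
    for name in names:
        if len(name) > NETBIOS_MAX_LEN:
            raise ValueError(f"{name} is longer than {NETBIOS_MAX_LEN} characters")
    return names


if __name__ == "__main__":
    print(node_names("D001", "SQL", 3))
```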

1. Network

Now we need to enable RSS on the VMXNET3 NIC. If you open a PowerShell prompt and type Get-NetAdapterRss, you will see that it is NOT enabled by default for the NIC (it’s enabled globally in the OS, though). To enable RSS inside the VM, just open the VMXNET3 properties, scroll down to Receive Side Scaling, and enable it.

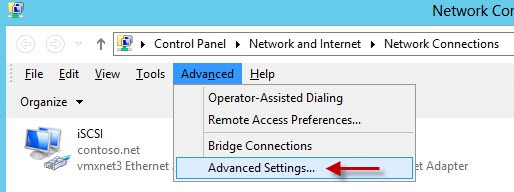

We also need to change the binding order of the NICs, or the SQL installer may complain. Open Network Connections, press the ALT key once (do NOT hold it), then from the magically appearing Advanced menu select Advanced Settings.

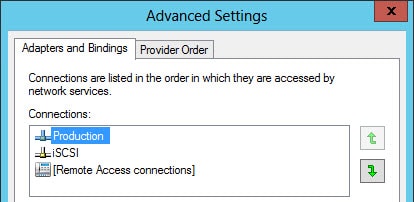

Put your “Production” NIC first, and the iSCSI NIC second.

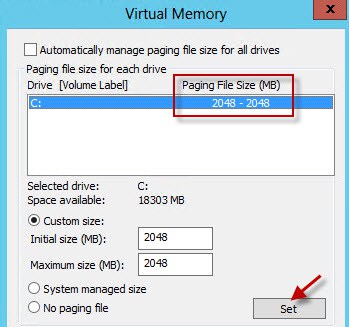

2. Swap File

Since you should be properly sizing your SQL VM’s memory to match the workload, it should never be swapping to disk. And SQL servers can chew up a lot of memory, so letting Windows manage the page file can result in huge page files that waste disk space. I generally configure a fixed 2GB page file.

3. Performance Counters

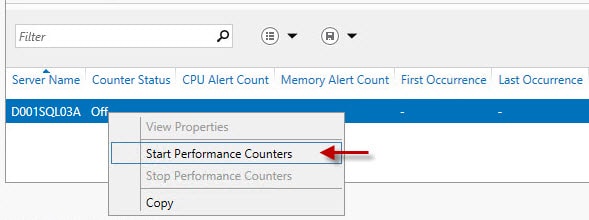

Windows Server 2012 has some built-in basic performance counters, which I always like to start. So on the Server Manager “Local Server” page scroll down to the PERFORMANCE section and start the counters.

Summary

And that’s it for the base VM configuration. As you can see, this is not just a click-next VM deployment. There are probably some additional best practices that could be applied to the SQL VM, but I think these are the major ones that I’ve come across and use on a daily basis. If you have additional suggestions for VM tweaks, please leave them in the comments.

What’s next? Configuring your iSCSI LUNs and multi-pathing. Check out Part 3 here.

thnx

http://kb.vmware.com/selfservice/microsites/searc…

VMware says there: if a virtual machine uses PVSCSI, it cannot be part of a Microsoft Cluster Server (MSCS) cluster.

I would like to know what the best practice is for shared disks in SQL 2012 failover clustering: RDM in physical mode or in-guest iSCSI?

You didn't say how you created the shared disks.

This is my first SQL failover cluster in a virtual environment. Thank you for your help.
