Requirements

For my home labs I’m very picky about size and power, and somewhat sensitive about cost. CPU performance has never been a problem for my home VMs, so I focus more on memory and quality parts than on springing for hyperthreading, high clock speeds, or overclocking. I like the micro-ATX form factor for ESXi servers, so that’s also on my list of requirements.

Processor

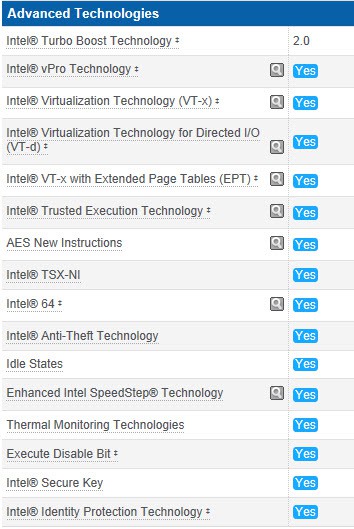

Processor selection is very important though, as Intel differentiates their SKUs by feature set, clock speed, thermal ratings, and other factors. For full virtualization support I want a ‘pimped out’ CPU with VT-x with extended page tables, VT-d, AES-NI, and TXT. I also want built-in graphics, so that I don’t need a graphics card, which is power hungry, eats up a slot, and costs more money.

After reviewing the current Intel Haswell choices and checking my $200 CPU budget (which I find is a good sweet spot for ESXi hosts), I decided my ticket to paradise is an Intel i5-4570s. It has all of the advanced technologies listed above, sports four cores at 2.9GHz, and has a 65W TDP. If you want to go for broke, then the i7-4770s is basically the same processor but hyperthreaded and clocked at 3.1GHz. It costs 50% more at $300, which I don’t feel is worth it for my VMs.

Memory

As I’m sure you know, you can never have enough memory when virtualizing your environment. The consumer Intel chipset and CPUs max out at 32GB, so I’m going to run the maximum configuration. What can be tricky is finding low profile memory, since I’ll be using a super slim case for my server.

To that end I needed to find 4 sticks of 8GB memory, which is commonly sold in 16GB dual-DIMM sets. The Haswell chipset supports 1600MHz memory, so that’s the minimum that I wanted to buy. For ESXi servers I’m not into overclocking, as that wastes power. The G.Skill F3-1866C10D-16GAB seemed like the ideal solution, at $126 a pair. I’d need two pairs for 32GB. It’s rated at 1866 MHz, so I have plenty of headroom.

Motherboard

I’ve always had exceptionally good experiences with Asus motherboards, so they are my go-to vendor when shopping for a new whitebox system. One factor you have to be very careful about is the onboard NIC(s). ESXi doesn’t have the broad NIC support that Windows enjoys, so you need to pay attention. I always add a dual-NIC PCIe card, but I like having three active NICs, so the onboard NIC needs to work too.

The latest Intel chipset is the Z87. So clearly, I’m not going with yesteryear’s technology and getting something older or lower end. As I mentioned before, I like the micro-ATX format, so taking all of that into consideration I picked the Asus Z87M-Plus.

It also sports 1x PCIe 3.0 x16, 1x PCIe 2.0 x16, and 2x PCIe 2.0 x1 slots. On the LAN side of the house it has the Realtek 8111GR Gigabit NIC, which ESXi 5.1 recognizes out of the box. (Note: ESXi 5.5 needs a custom build; see the vSphere 5.5 Update at the end of this article.) This motherboard runs about $140.

Additional NICs

Even for a home ESXi server I like to use multiple NICs. Bandwidth generally isn’t a problem, but I like to fully test out VDS, NIOC, and other network features. My go-to add-in NIC is the SuperMicro AOC-SG-i2, which has two Intel NICs that ESXi immediately recognizes. With this add-in PCIe card the system will have a total of three NICs, which I find adequate for a home server.

Storage

Normally I would not buy any local storage for my ESXi servers, and just rely on my iSCSI QNAP for all storage. However, VMware and other companies are coming out with great new technology that shares internal storage and allows you to scale out performance. VMware VSAN can take advantage of both spinning piles of rust and SSDs. Thus, I’m now populating all three of my ESXi hosts with a Western Digital 2TB Black drive ($159) and a 256GB Samsung 840 SSD ($180). I opted for the non-Pro Samsung, since this will just be for VSAN testing and I don’t need the extra performance.

Case

Over the many iterations of my ESXi servers I’ve always used the Antec Minuet 350 slim case. Just as the name implies, the case is very slim and super compact. The power supply is very quiet, and the case looks very nice. So to match my other systems I’m sticking with the same case. I’m not worried about the overblown “Haswell” power supply issues, as I will have a couple of case fans that will negate any possible low power draw issues from the CPU.

Bill of Materials

Intel i5-4570s $199

G.Skill Ares 16GB Kit $126 x2

Asus Z87M-Plus $140

SuperMicro AOC-SG-i2 $93

Western Digital 2TB Black $159

Samsung 840 256GB $180

Antec Minuet 350 $89

$1112 Total or $773 without local storage
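
As a quick sanity check on the totals above, here is a minimal Python sketch that adds up the bill of materials. The prices and quantities come straight from the list; the `BOM` structure, the storage flag, and the `bom_total` helper are hypothetical names used only for illustration.

```python
# Bill of materials: (item, unit price in USD, quantity, is_local_storage).
# Prices and quantities are taken from the list above; the layout of this
# structure is an assumption made for the sake of the example.
BOM = [
    ("Intel i5-4570s", 199, 1, False),
    ("G.Skill Ares 16GB Kit", 126, 2, False),
    ("Asus Z87M-Plus", 140, 1, False),
    ("SuperMicro AOC-SG-i2", 93, 1, False),
    ("Western Digital 2TB Black", 159, 1, True),
    ("Samsung 840 256GB", 180, 1, True),
    ("Antec Minuet 350", 89, 1, False),
]


def bom_total(items, include_storage=True):
    """Sum price * quantity, optionally skipping the local storage items."""
    return sum(
        price * qty
        for _name, price, qty, is_storage in items
        if include_storage or not is_storage
    )


if __name__ == "__main__":
    print("Total:", bom_total(BOM))
    print("Without local storage:", bom_total(BOM, include_storage=False))
```

Swapping in your own parts and prices is just a matter of editing the list, which makes it easy to see how far a different CPU or memory choice moves the total.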

Final Thoughts

The price for my base ESXi server hasn’t changed much over the years, at about $800 with no storage. But the gains in CPU horsepower, lower power consumption, and built-in graphics are very nice indeed. I always load ESXi onto a cheap USB memory stick, so I just pick one up at my local electronics store. You really don’t need anything larger than 4-8GB.

I look forward to the upcoming beta of the VMware VSAN product, and also testing other new vSphere 5.5 features such as Flash Read Cache. To fully test out these new virtualization features and products, local SSD and spinning rust buckets are a must. Also remember that VSAN needs three hosts, so take that into account when sizing up your new lab.

Build Update

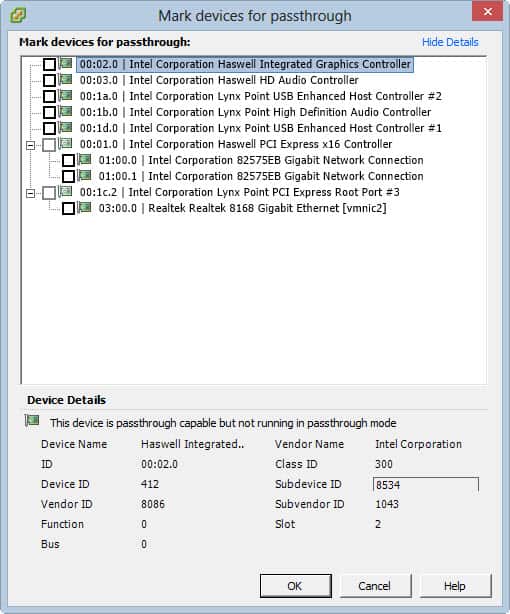

Now that I’ve built the “server” and got ESXi 5.1 up and running, I wanted to follow up with a few notes. Firstly, the motherboard does support VT-d, so you CAN pass through certain PCIe devices to a VM. The screenshot shows the devices available for passthrough.

I ran into some issues trying to get ESXi 5.1 installed to a USB key drive. ESXi 5.0 and later use GPT partitioning, even on drives less than 2TB. This works fine on my other Asus motherboards, but it appears there’s a BIOS bug that prevents that from working. No matter what I did, ESXi 5.1 would install on the USB drive just fine, but would then not boot. The solution is as follows:

1) Boot your server from the ESXi installation media (you can use a USB key or CD-ROM). If you want to boot your install media from USB, then download UNetbootin and use the diskimage option to write your ESXi installation ISO to the USB drive. BE SURE the USB drive is formatted as FAT, not NTFS. If it is NTFS, I would suggest using the diskpart “clean” option then re-partition and format as FAT to clear any NTFS remains.

2) During the boot process you will see a prompt in the lower left hand corner that says Shift-O to edit boot options. Press Shift-O.

3) Press the space bar and type formatwithmbr and press return. Install ESXi as you normally would to your USB key drive.

As with any server, make sure that Intel Virtualization extensions and VT-d are enabled in the BIOS. If you bought memory with XMP settings, then be sure to configure the BIOS to use the XMP settings for higher bus speeds.

vSphere 5.5 Update

VMware has removed many (all?) of the Realtek NIC drivers from the base vSphere 5.5 ESXi installation ISO. If you are running vSphere 5.1 and upgrading to 5.5, the NIC drivers will stay in place and continue to function. If you want to do a fresh install, there’s a great blog post on a simple way to create a custom ISO with the Realtek drivers here. Basically you use the image builder process to create a new ISO image. It’s easy and just takes a few minutes.

Can you pass through the SATA controller?

I don’t think so… you cannot see it on the list… I am looking for any Z87 board that would allow passthrough of the onboard SATA controller, and I’ve found none so far.

Some people reported ESXi 5.5 doesn't support Lynx Point's SATA. Also, passthrough is completely broken for Intel SATA ports. Is that true??

Hey man!! I was trying to install ESXi 5.5 all night, then I read your post, added the formatwithmbr parameter, and it is done!!

Thank you so much!!!

You do not know how I suffered 😀

Nice write up, I have a very similar system (Z87M-Plus, HyperX, Seagate NAS, Pro/1000 PT dual port, v354) and have a couple of Q's I was hoping you could help with ..

– What boot settings / CSM compatibility mode settings do you use in the Z87M-Plus's BIOS? I get the problem where sometimes on startup it doesn't boot VMware and you need to reset the server.

– For USB passthrough which Lynx controller did you enable (#1 or #2)? Did this affect the ESXi boot from USB?

– Have you tried VGA pass through with the onboard or any particular card ?

Sorry for the shotgun of questions, but any advice would be greatly appreciated 🙂

Hi Derek, have you managed to get GA 5.5 U1 to run vSAN on this build? I have built my lab using very similar components to yours (2TB Seagate Barracudas and different dual-port NICs being the exception). Unfortunately it's a no go with vSAN. My problems seem to be the same as others are reporting with the GA code, where the onboard AHCI controller is no longer supported:

http://www.yellow-bricks.com/2014/03/17/vsan-ahci…

As soon as you add a host to a vSAN-enabled cluster, the task times out and then the hosts will not boot. Even the ESXi installer won't allow you to reinstall until you have blown away the vSAN-created partitions (with fdisk or similar) on both the SSD and the HDD.

No I haven't used VSAN, since I now work at Nutanix. But maybe a reader can reply with some insight.

Thanks for the post, I'm choosing between 3 mobo's:

z87 a

z87 plus

z87 m plus

Now I know what to choose. The z87 m plus is relatively cheap, but I think it is good enough for just a test environment build, so now I can maximize my budget for 4×8 RAM 🙂

I might also be able to add a SSD, thanks thanks thank you !!!!