The story I broke last week about impending licensing changes to vSphere 5.0 turned out to be spot on. In fact, CRN published a story about the impending changes and referenced my blog. Today VMware made an official announcement of the changes, which you can find here. CRN has also just put out a story about the new changes, which you can read here.

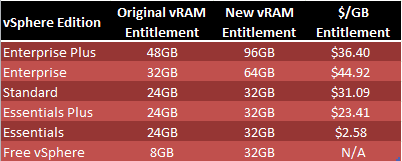

I’ve summarized the changes in the table below. What is a bit odd is the $/GB cost of the vSphere Enterprise edition: looked at through a vRAM lens, it is actually more expensive per vRAM GB than Enterprise Plus.

In addition to the vRAM entitlement changes, as I reported last week, the vRAM counted against your entitlement is capped at 96GB per VM, even if the VM is configured with the maximum 1TB. Also, as I mentioned before, a 12-month vRAM average will be used to determine the number of licenses you need, so that short-lived spikes won’t cost you more money indefinitely.
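To make the cap and the averaging concrete, here is a minimal Python sketch of how I read the two rules. The function names are my own, the per-license entitlement is a parameter you would take from VMware's table, and the one-license-per-CPU minimum is my assumption based on vSphere still being licensed per processor. This is not VMware's actual compliance calculation.

```python
import math

PER_VM_CAP_GB = 96  # per-VM cap on counted vRAM, per the announcement


def counted_vram_gb(vm_allocations_gb):
    """Sum the configured vRAM of powered-on VMs, counting at most 96GB per VM."""
    return sum(min(gb, PER_VM_CAP_GB) for gb in vm_allocations_gb)


def licenses_needed(monthly_vram_gb, entitlement_gb, cpu_count):
    """Estimate licenses from the average of the last 12 monthly vRAM figures.

    entitlement_gb is the vRAM entitlement per license for your edition
    (an input here; take the real figure from VMware's table).
    """
    window = monthly_vram_gb[-12:]
    average = sum(window) / len(window)
    by_vram = math.ceil(average / entitlement_gb)
    # Assumption: at least one license per physical CPU is still required.
    return max(by_vram, cpu_count)


# A 1TB VM counts as only 96GB toward the pool.
pool = counted_vram_gb([1024, 32, 16])

# Eleven months at 180GB and one spike to 300GB, with an assumed
# 96GB entitlement per license on a 2-CPU host.
needed = licenses_needed([180] * 11 + [300], entitlement_gb=96, cpu_count=2)
```

With these made-up numbers the 12-month average is 190GB, so the one-month spike to 300GB does not by itself push you into buying more licenses.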

What was not announced was any vRAM-only entitlement SKU, capping vRAM based on pRAM, cross-edition pooling of entitlements, or grandfathering of existing vSphere 4.x customers with additional licenses to cover existing capabilities. With VMware reporting record profits this year, some customers may still wonder why VMware needs such a complex licensing scheme, one that may still cost scale-up users more money to use their existing hardware. It would almost make sense to kill the Enterprise SKU.

What I also found interesting is that on July 27th VMware announced licensing changes to their VSPP program, which is for cloud providers. You can read the full announcement here. Noteworthy is their movement AWAY from allocated vRAM (which is used for ‘regular’ vSphere 5.0 instances described above) to reserved vRAM, with a minimum floor of 50% of VM memory and a cap of 24GB per VM. According to VMware, “We now charge you for the physical memory reserved for the VM, allowing you to vary the memory oversubscription ratio according to the needs of the application and service level.” This more closely aligns VM memory usage to pRAM, although it is not directly tied to the amount of pRAM your systems have.

VMware goes on to state:

> Memory oversubscription works because many applications don’t use all the memory allocated to them, and this is compounded by application deployment guidelines for off-the-shelf applications that tend to over-estimate required memory. In addition, with VMs on the same host running identical copies of the same Operating System and/or application mean many memory pages are duplicates. Under vSphere, those VMs can share just one set of those identical memory pages, effectively “deduplicating” memory.
>
> vSphere makes it possible to reserve less physical RAM for a VM without affecting performance, and has five main patented techniques to maximize memory oversubscription. Of course, it is possible to starve a VM of memory too, so there is a 50% reserved memory minimum (or floor, computed as the reserved RAM divided by allocated RAM). We chose this minimum on the advice of our engineering team. If you try to reserve less than 50% of allocated memory you will still be charged for a 50% reservation.
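The floor and cap in the VSPP announcement can be sketched in a few lines of Python. The function name is hypothetical, and applying the 50% floor before the 24GB cap is my own reading of the announcement, not something VMware spells out.

```python
VSPP_FLOOR_RATIO = 0.5   # 50% reserved-memory minimum from the announcement
VSPP_PER_VM_CAP_GB = 24  # per-VM cap from the announcement


def billable_reserved_vram_gb(allocated_gb, reserved_gb):
    """Reserved vRAM charged for one VM under the VSPP model as I read it.

    Assumption: the floor is applied first, then the per-VM cap.
    """
    floor_gb = VSPP_FLOOR_RATIO * allocated_gb
    return min(max(reserved_gb, floor_gb), VSPP_PER_VM_CAP_GB)


# 16GB VM with only 4GB reserved: charged at the 50% floor.
small_reservation = billable_reserved_vram_gb(16, 4)

# 16GB VM with 12GB reserved: charged for the actual reservation.
normal_reservation = billable_reserved_vram_gb(16, 12)

# 64GB VM with 10GB reserved: the 32GB floor is then capped at 24GB.
large_vm = billable_reserved_vram_gb(64, 10)
```

Under the allocated model used for regular vSphere 5.0, those same three VMs would count as 16GB, 16GB, and 64GB.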

VMware makes an excellent case for why they are now changing their model from allocated vRAM to reserved vRAM. If this model is so great for cloud providers, and VMware bills vSphere as a cloud operating system, why isn’t this new vRAM model good enough for us enterprise users as well? VMware basically admits the allocated vRAM model was not optimal, yet now enterprise customers are forced to use it. It makes you wonder if the left hand is talking to the right hand inside VMware.

I am glad VMware listened to the significant uproar and very emotional customer input, and the revision will reduce price increases for customers. However, I think using their new VSPP model for vRAM tracking makes a lot more sense and would standardize their licensing scheme. I can’t imagine VMware making a second revision to their v5.0 licensing model. Maybe in 5.x or 6.x they will unify the vRAM models. But then how many customers will have started a migration to other hypervisors?

P.S. There are also some VERY noteworthy clarifications for non-View VDI users and those upgrading from previous ESX releases to vSphere 5.0. Check them out here.

Reserved vRAM is not the same as pRAM! pRAM is physical RAM per box. Reserved vRAM is just the amount of configured memory that is reserved for a VM. Totally different thing.

Yes, I very much agree. I clarified my pRAM statement to make it clearer, thanks.

I fat fingered my comment moderation and accidentally deleted one.

Steven J said:

You make a great point but most customers are unaware of the VSPP model and will only know what they have paid for already and what they will need to pay to upgrade or scale out.

All that said, if they did bring the licensing models in line, that would really play up the advantages of the memory smarts and make vSphere better value.

I can’t see many customers leaving with the new limits in place. With the increase I really don’t think the costs will dramatically increase. You need to detach yourself from thinking about cost per host and think cost per VM. To give an example: yesterday, 4 hosts (64GB each) running 80 VMs. Today, 1 host with 256GB could potentially run this and the licensing cost would be lower: 8 licenses the old way, 3 the new way. Obviously this is an extreme example, and it could easily be 2 hosts with 128GB and so only 4 licenses the old way; of course, you could also run vSphere 4.1 on a 256GB host today and achieve it with just 2 licenses (assuming 2 procs). Anyway, the point is there isn’t going to be much of a difference; the model had to change, and I think the new one is fair.

It’s really starting to come down to “can VMware be trusted as a company”. They are building a real history of selling software, selling maintenance on that software, and then changing the rules.

I pay maintenance to continue to maintain rights to use future versions of a product. I don’t expect those rights to be diluted as VMware sees fit by rule changes.

I paid a premium to get the top unrestricted version of ESX 3.5, Enterprise. They released vSphere 4.0, and suddenly there’s an Enterprise Plus.

They released vSphere 5 and changed the rules once again, this time with a change that devalued the Plus licensing I’d been paying maintenance on by a factor of 3x (numerous large servers with overcommitted memory).

I can’t think of a single other software company that works this way.

They’ve backed down this time due to public backlash, but I’m starting to seriously question whether this is a company I can trust moving forward.

>It’s really starting to come down to “can VMware be trusted as a company”.

Agreed. When I first read of the licensing changes, I happened to be deploying a huge app on a large server cluster. I decided to lose a weekend and invest it in installing and learning Proxmox (OpenVZ/KVM), as I needed a 100% open source solution to avoid ‘the man’ changing the rules because their CFO had some greed itch they had to scratch.

So far, I’m not looking back. The app got deployed with a significant performance increase and no real loss of capability over ESXi.